Microsoft has announced the general availability of its Language Understanding service (LUIS) and Azure Bot Service, two artificial intelligence services designed to let developers create apps that see, hear, speak, understand, and interpret users’ needs through natural methods of communication.

The company wants developers of every skill level to be able to build conversational AI that extends an application’s reach to audiences across various conversational channels. Such bots can understand natural language, reason about content, and take intelligent actions.

What is Azure Bot Service?

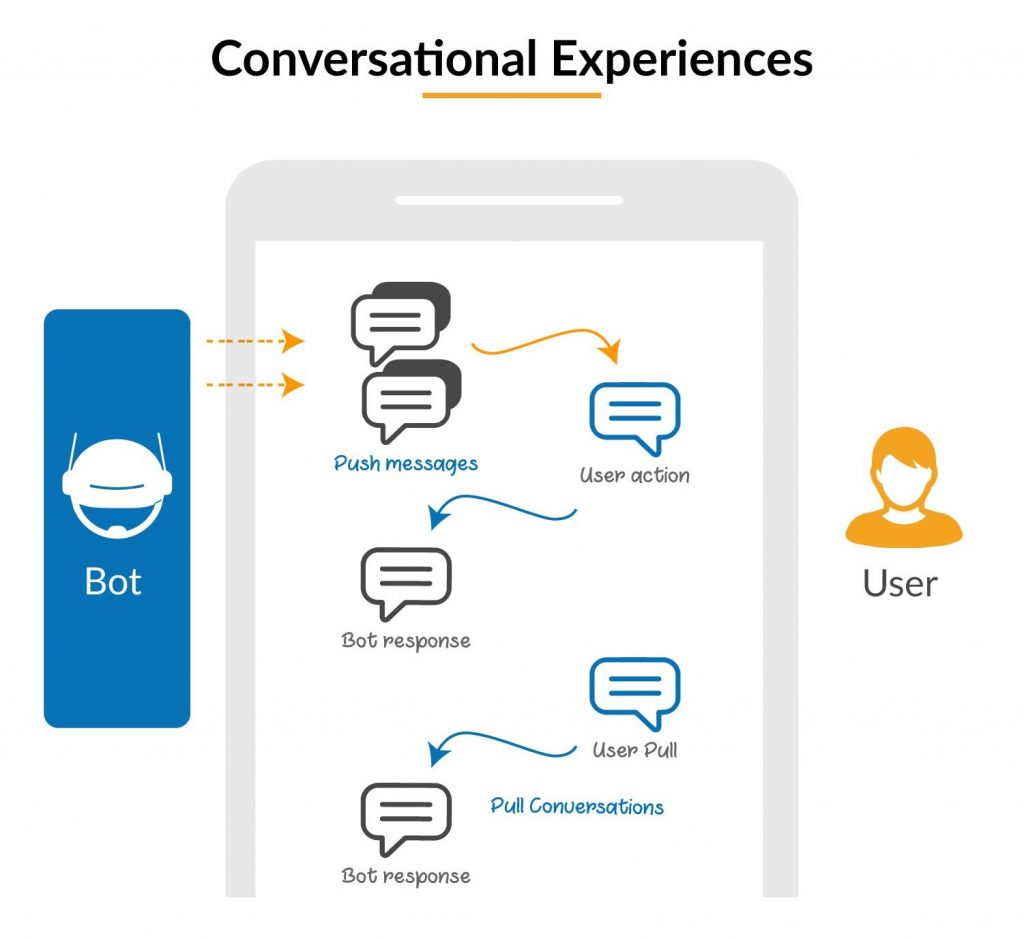

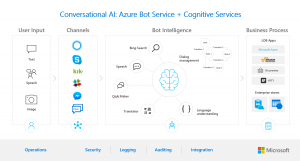

Bots provide a conversational interface that accepts user input in different modalities, including text, speech, cards, and images. Accordingly, Azure Bot Service provides a scalable, integrated bot development and hosting environment for conversational bots that can reach customers across multiple channels on any device.

The Bot Service offers fourteen channels for interacting with users. Intelligence is added through cloud AI services, which form the bot’s brain: the part that understands and reasons about user input. Based on that understanding, the bot can use action handlers to help the user complete tasks, answer questions, or even make small talk.
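The flow described here, understanding the input and then routing it to an action handler, can be sketched roughly as follows. This is only an illustration: the `understand` function is a toy keyword rule standing in for the cloud AI step, and every name in it (`HANDLERS`, `handle`, the three handler functions) is hypothetical rather than part of Azure Bot Service.

```python
from typing import Callable, Dict


# Hypothetical action handlers; the names are illustrative only.
def complete_task(text: str) -> str:
    return "Starting task for: " + text


def answer_question(text: str) -> str:
    return "Looking up an answer for: " + text


def chit_chat(text: str) -> str:
    return "Happy to chat!"


# Map each intent label to the handler that should act on it.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "task": complete_task,
    "question": answer_question,
    "chitchat": chit_chat,
}


def understand(text: str) -> str:
    """Toy stand-in for the AI step: turn raw input into an intent label."""
    lowered = text.lower().strip()
    if lowered.endswith("?"):
        return "question"
    if lowered.startswith(("book", "order", "schedule")):
        return "task"
    return "chitchat"


def handle(text: str) -> str:
    """Understand the input, then dispatch to the matching action handler."""
    intent = understand(text)
    return HANDLERS[intent](text)
```

In a real bot the keyword rule would be replaced by a call to a language service, while the dispatch table could stay much the same.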

What does Language Understanding do?

Language Understanding allows a bot to understand natural language input and reason about it to take the appropriate action. It helps developers build custom models for their business vertical with little effort and without prior expertise in data science. Designed to identify valuable information in conversations, it interprets a user’s goals and distills the relevant details from sentences, producing a high-quality, nuanced language model.
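To make "interpreting a user’s goals and distilling details" more concrete, here is a minimal sketch of the kind of structured result such a step might produce: an intent plus named entities. It uses a single hand-written regular expression, not a trained model, and the `BookFlight` intent, the `Understanding` class, and the `interpret` function are all assumptions made up for this example; none of them comes from the service itself.

```python
import re
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Understanding:
    """Illustrative result: the user's goal and the details extracted."""
    intent: str
    entities: Dict[str, str] = field(default_factory=dict)


# Hypothetical pattern for a made-up "BookFlight" intent.
BOOK_FLIGHT = re.compile(
    r"\bflight to (?P<destination>[A-Za-z ]+?)(?: on (?P<date>\w+))?$",
    re.IGNORECASE,
)


def interpret(utterance: str) -> Understanding:
    """Return the intent and entities found in the utterance, if any."""
    match = BOOK_FLIGHT.search(utterance.strip())
    if match:
        # Keep only the named groups that actually matched.
        entities = {k: v for k, v in match.groupdict().items() if v}
        return Understanding("BookFlight", entities)
    return Understanding("None")
```

A trained language model would generalize far beyond one fixed pattern, but the shape of the output (a goal plus the values needed to act on it) is the useful idea here.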

In a blog post, Microsoft stated: “Developers can connect to other Azure services to enrich their bots as well as add Cognitive Services to enable your bots to see, hear, interpret, and interact in more human ways. For example, on top of language, the Computer Vision and Face APIs can enable bots to understand images and faces passed to the bot.”
