Unless you’re sitting in complete darkness, I’d like you to do me a favor. Look around the room you’re in and pick a spot on the wall. Then imagine drawing a straight line from your eyes to that spot. Now follow a second line from that spot toward the source of light in the room. Congratulations, you’ve just done, in rough terms, what happens in ray tracing.

Ray tracing is a graphics rendering technique that’s been in the tech news quite a bit, particularly since the launch of Nvidia’s Turing family of GPUs, which tout real-time ray tracing as a way to make games look better.

So what exactly is ray tracing? To understand the concept, it helps to know why it’s considered a step up from the traditional method games use to draw, or render, scenes onto your screen.

Rasterization

Most current games use a technique called rasterization. The game code directs your GPU to build a 3D scene out of polygons. These flat, 2D shapes are usually triangles, and they make up the majority of the visual elements you see. Once the scene is built, it is translated, or rasterized, into individual pixels.
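To make those two steps a little more concrete, here is a toy sketch in Python of rasterizing a single triangle. It is not how a real GPU pipeline is implemented: the pinhole camera at the origin, the 16 by 8 pixel grid, and the helper names `project`, `edge`, and `rasterize` are all illustrative assumptions. Each 3D corner is projected onto the 2D screen, and then every pixel center is tested against the triangle's three edges to decide which pixels it covers.

```python
# Toy rasterizer: one 3D triangle onto a small pixel grid.
# Assumptions (illustrative only): pinhole camera at the origin looking
# down +z with focal length 1, and a WIDTH x HEIGHT pixel grid.

WIDTH, HEIGHT = 16, 8


def project(point, width=WIDTH, height=HEIGHT):
    """Perspective-project a 3D point (x, y, z) to 2D pixel coordinates."""
    x, y, z = point
    # Perspective divide: the farther away a point is, the closer to the
    # center of the screen it lands.
    ndc_x, ndc_y = x / z, y / z
    px = (ndc_x + 1) * 0.5 * width         # map [-1, 1] to [0, width]
    py = (1 - (ndc_y + 1) * 0.5) * height  # flip y so row 0 is the top
    return px, py


def edge(a, b, p):
    """Signed-area test: which side of the edge a->b the point p is on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def rasterize(triangle):
    """Return the set of (col, row) pixels whose centers the triangle covers."""
    a, b, c = (project(v) for v in triangle)
    covered = set()
    for row in range(HEIGHT):
        for col in range(WIDTH):
            p = (col + 0.5, row + 0.5)  # sample each pixel at its center
            w0, w1, w2 = edge(b, c, p), edge(c, a, p), edge(a, b, p)
            # Inside if p is on the same side of all three edges
            # (checking both signs accepts either vertex order).
            if (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0):
                covered.add((col, row))
    return covered


triangle = [(-1.0, -1.0, 2.0), (1.0, -1.0, 2.0), (0.0, 1.0, 2.0)]
pixels = rasterize(triangle)
for row in range(HEIGHT):
    print("".join("#" if (col, row) in pixels else "." for col in range(WIDTH)))
```

Running it prints a small triangle drawn in `#` characters. Testing pixel centers against edge functions is one common way to decide coverage; real hardware adds clipping, depth testing and a great deal more.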

The pixels are then processed by a shader, which applies color, textures and lighting effects on a per-pixel basis to give you a fully rendered frame. Repeat this 30 or 60 times every second and you’ve got yourself a responsive video game.
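The shading step can be sketched just as loosely. The example below assumes a simple Lambertian (diffuse) lighting model, one of the most basic shading models, in which a pixel's brightness depends on the angle between the surface normal and the direction to the light. The function name `shade_pixel` and its `ambient` term are hypothetical choices for illustration, not any particular engine's shader.

```python
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def shade_pixel(albedo, normal, light_dir, light_color=(1.0, 1.0, 1.0), ambient=0.1):
    """Lambertian (diffuse) shading for a single pixel.

    albedo:    surface color sampled from the texture, RGB in [0, 1]
    normal:    surface normal at this pixel
    light_dir: direction from the surface toward the light
    """
    n, l = normalize(normal), normalize(light_dir)
    # Brightness falls off with the cosine of the angle to the light;
    # surfaces facing away from it get no direct light at all.
    diffuse = max(0.0, dot(n, l))
    # Hypothetical constant ambient term standing in for all indirect light.
    return tuple(min(1.0, a * (ambient + diffuse * lc)) for a, lc in zip(albedo, light_color))


red = (1.0, 0.0, 0.0)
up = (0.0, 0.0, 1.0)
tilted = (0.0, math.sin(math.radians(60)), math.cos(math.radians(60)))
print(shade_pixel(red, up, up))      # lit head-on
print(shade_pixel(red, up, tilted))  # light 60 degrees off the normal
```

The first call comes out as pure red (clamped to 1.0), the second at roughly 60% brightness. Note how the flat ambient term only guesses at indirect light, which is exactly the kind of approximation the next section is about.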

Limitations of Rasterization

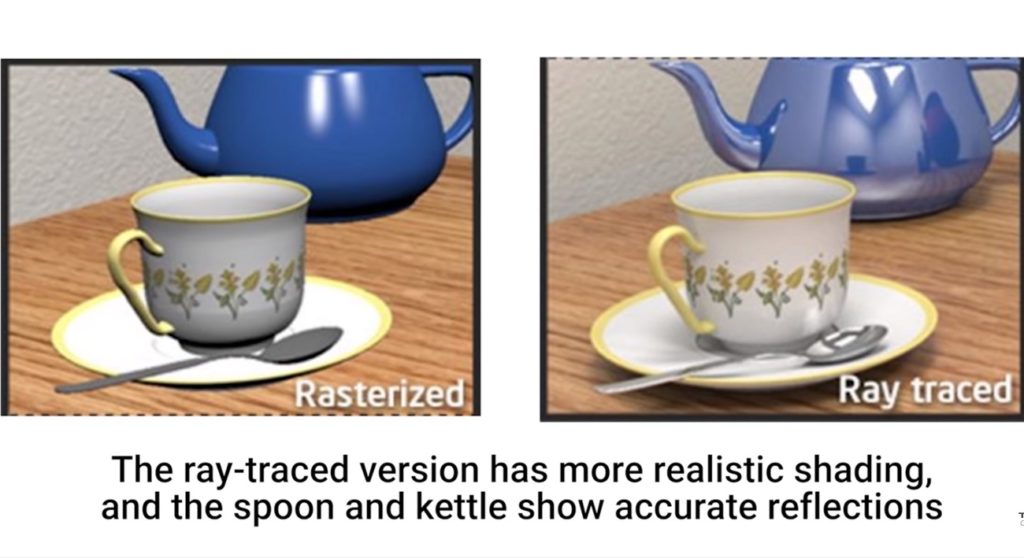

Though rasterization has served us well for a long time, it is not without limitations. Rasterization approximates an image by projecting 3D shapes onto a 2D screen and then uses shaders to estimate what the lighting should be.

This approach has difficulty tracking exactly how light travels and bounces within a scene. That is where ray tracing comes in: it does a much better job of simulating how light bounces. You may well have been enjoying it for years without knowing it, if you’ve watched any movie that features computer-generated imagery (CGI).

Ray Tracing in Movies

What made ray tracing possible in movies, though? Big-budget production companies have deep enough pockets to bankroll rendering the effects on large server farms. Even so, rendering a single movie, especially a high-quality animated one, can take many months.

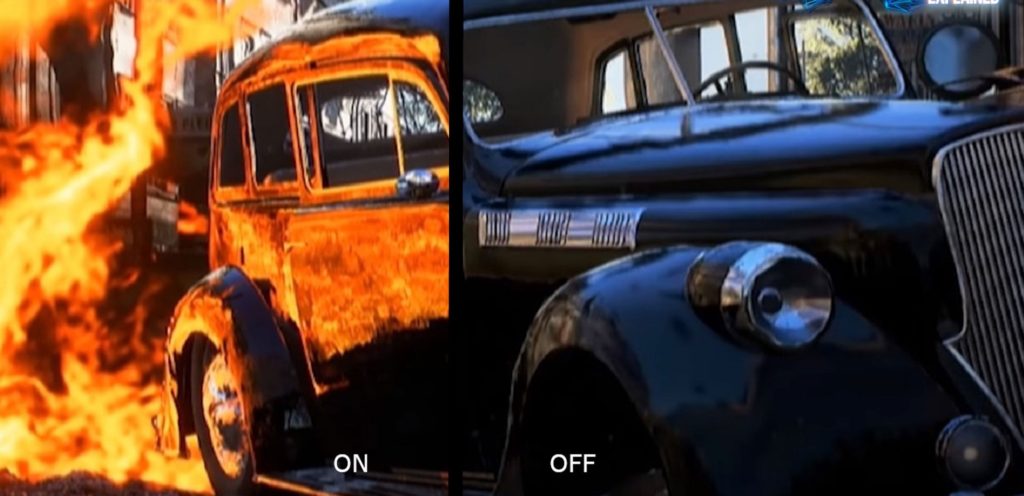

Film rendering also uses far more computationally intensive techniques, with many bounces for each ray of light and a huge number of rays coming from each source. Just check out the still life above, created with a ray tracing program. You would be forgiven for mistaking it for a real photograph at first glance.

So ray tracing is amazing, right? Why not use it for everything, then? The downside of ray tracing is its computational cost. The average 20-year-old gaming at home doesn’t have millions of dollars or a render farm. On top of that, games have to be rendered at around 25 frames per second or more, not one frame per day, as has been the case in some Pixar films.
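The size of that gap is easy to put into numbers. Using the article's own round figures (about 25 frames per second for a game versus one frame per day for some film renders), a back-of-the-envelope calculation looks like this; `frame_budget_ms` is just a hypothetical helper name.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def frame_budget_ms(fps):
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps


for fps in (25, 30, 60):
    print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")

# One frame per day, as in the film example, versus a 25 fps game.
film_frame_ms = SECONDS_PER_DAY * 1000
ratio = film_frame_ms / frame_budget_ms(25)
print(f"A game frame gets {ratio:,.0f}x less time than a day-long film frame")
```

At 25 fps each frame gets 40 ms, and at 60 fps it gets under 17 ms, so a game frame has roughly two million times less time than a film frame rendered over a full day.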

Consumer Grade Ray Tracing

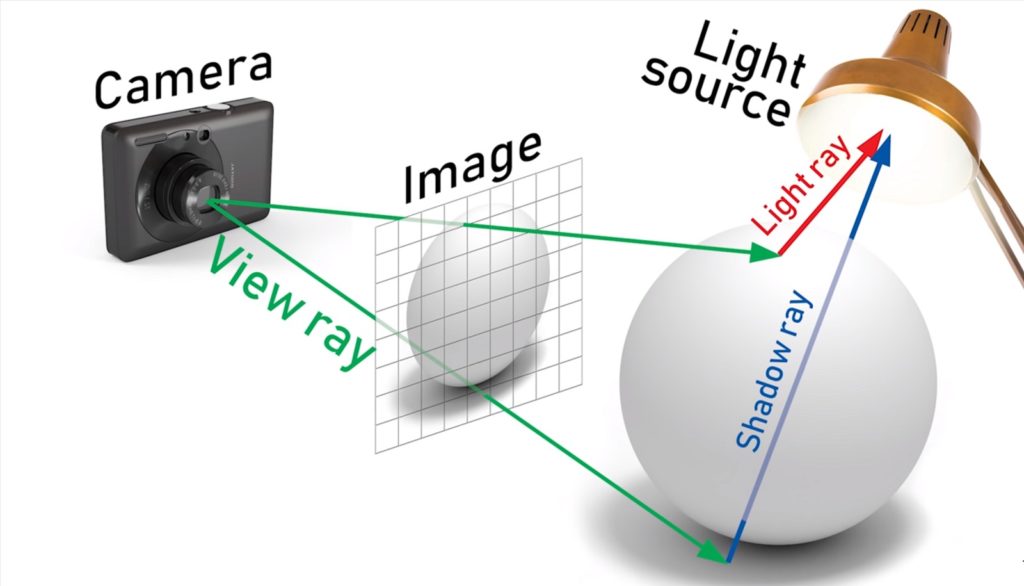

Consumer-grade ray tracing does not track the trillions of rays that come from each light source. Instead, it lessens the computational load by tracing paths backward from a virtual camera, which represents the user’s eyes: through a single pixel, then to whatever object is behind that pixel, and finally back to the light source in the scene.
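That camera-to-pixel-to-object-to-light path can be sketched in a few dozen lines of Python. This is a minimal illustration under stated assumptions, not how Nvidia's hardware or DirectX implement it: the scene is made only of spheres, the camera is a pinhole at the origin looking down +z, there is a single point light, and all function names are hypothetical. One primary ray is cast through a pixel; if it hits something, a second "shadow" ray is cast from the hit point toward the light to check whether anything blocks it.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def scale(v, s):
    return tuple(x * s for x in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    return scale(v, 1.0 / math.sqrt(dot(v, v)))


def hit_sphere(origin, direction, center, radius):
    """Distance along a normalized ray to the nearest sphere hit in front of it, or None."""
    oc = sub(origin, center)
    b = dot(oc, direction)
    disc = b * b - (dot(oc, oc) - radius * radius)
    if disc < 0:
        return None  # the ray misses the sphere entirely
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):  # nearer intersection first
        if t > 1e-6:
            return t
    return None


def nearest_hit(origin, direction, spheres):
    """Closest (distance, center) among all spheres, or None."""
    best = None
    for center, radius in spheres:
        t = hit_sphere(origin, direction, center, radius)
        if t is not None and (best is None or t < best[0]):
            best = (t, center)
    return best


def trace_pixel(col, row, width, height, spheres, light_pos):
    """Camera -> pixel -> object -> light. Returns a brightness in [0, 1]."""
    camera = (0.0, 0.0, 0.0)
    # Aim through the pixel center on an image plane at z = 1.
    x = (col + 0.5) / width * 2 - 1
    y = 1 - (row + 0.5) / height * 2
    direction = normalize((x, y, 1.0))
    hit = nearest_hit(camera, direction, spheres)
    if hit is None:
        return 0.0  # nothing behind this pixel: background
    t, center = hit
    point = add(camera, scale(direction, t))
    normal = normalize(sub(point, center))
    to_light = sub(light_pos, point)
    light_dist = math.sqrt(dot(to_light, to_light))
    to_light = scale(to_light, 1.0 / light_dist)
    # Shadow ray: start just off the surface and look for anything in the way.
    blocker = nearest_hit(add(point, scale(normal, 1e-4)), to_light, spheres)
    if blocker is not None and blocker[0] < light_dist:
        return 0.0  # the light is blocked, so this pixel is in shadow
    return max(0.0, dot(normal, to_light))


ball = ((0.0, 0.0, 5.0), 1.0)
small = ((0.0, 2.0, 2.0), 0.5)
light = (0.0, 4.0, 0.0)
print(trace_pixel(0, 0, 1, 1, [ball], light))         # lit
print(trace_pixel(0, 0, 1, 1, [ball, small], light))  # shadowed by the small sphere
```

Calling `trace_pixel` for every column and row of an image produces a picture lit by one point light with hard shadows. Because the work starts from the camera, only rays that can actually reach the viewer are ever traced.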

For added realism, if the surface the ray bounces off absorbs or diffuses the light, like a rough rock or a tree trunk, the ray tracing algorithm can take these additional rays of light into account as well, so that any refractions or scattering that affect the shadows are displayed accurately.
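One common way to model those extra rays off a rough surface is to pick a random bounce direction weighted toward the surface normal (a cosine-weighted, or Lambertian, bounce) and to let each bounce absorb part of the light. The sketch below assumes that textbook model and uses hypothetical helper names; real-time ray tracers limit and filter these bounces in ways it does not attempt to show.

```python
import math
import random


def random_unit_vector(rng):
    """Uniformly distributed direction, via normalized Gaussian samples."""
    while True:
        v = (rng.gauss(0, 1), rng.gauss(0, 1), rng.gauss(0, 1))
        length = math.sqrt(sum(c * c for c in v))
        if length > 1e-9:
            return tuple(c / length for c in v)


def diffuse_bounce(normal, rng):
    """Cosine-weighted bounce direction off a rough (Lambertian) surface.

    Adding a random unit vector to the unit surface normal and normalizing
    the sum yields directions that always leave the surface and cluster
    around the normal.
    """
    while True:
        r = random_unit_vector(rng)
        d = tuple(n + c for n, c in zip(normal, r))
        length = math.sqrt(sum(c * c for c in d))
        if length > 1e-9:  # skip the rare case where r is exactly -normal
            return tuple(c / length for c in d)


def throughput(albedo, bounces):
    """Fraction of light a ray still carries after several bounces,
    if every surface absorbs (1 - albedo) of the light hitting it."""
    return albedo ** bounces


rng = random.Random(42)
normal = (0.0, 0.0, 1.0)
samples = [diffuse_bounce(normal, rng) for _ in range(2000)]
mean_cos = sum(d[2] for d in samples) / len(samples)
print(round(mean_cos, 2))
print(throughput(0.5, 3))
```

The average cosine between the sampled directions and the normal should come out close to 2/3, the expected value for a cosine-weighted distribution, while `throughput(0.5, 3)` shows that a ray bouncing off three surfaces that each absorb half the light carries only an eighth of it.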

Lighting is a crucial part of achieving a convincing 3D render, so once this process is completed for each pixel, your GPU can produce some insanely detailed images. As with most GPU features, adoption will come down to industry support. For starters, Microsoft has incorporated ray tracing into its latest DirectX 12 Ultimate.

Do share your thoughts and opinions with us about this piece of technology in the comments section.

Discover more from Dignited